What is TandemKV?

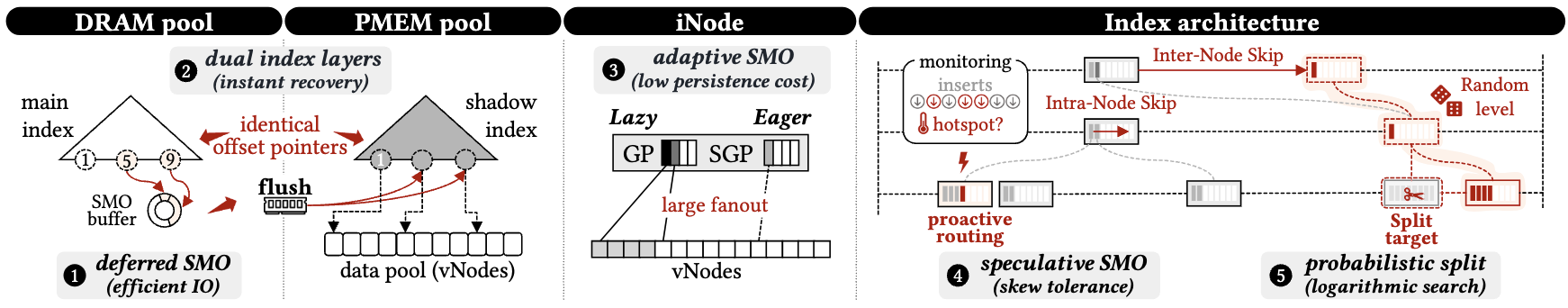

A write-efficient KV engine for heterogeneous DRAM–NVM memory that decouples index maintenance from durability. Structure modification operations (SMOs) execute on the DRAM main index first; durable reconciliation with the PMEM shadow index is a separate, runtime-controlled decision — governed by explicit thresholds rather than triggered on every write.

Core contributions

1New control boundary for durable indexing — SMOs need not be synchronous persistence tasks; structural durability is controlled independently by runtime signals

2Dual-index architecture — DRAM main index for foreground access + PMEM shadow index as durable recovery image, with symmetric offset-based addressing for crash-safe reuse

3Adaptive SMO policy — deferred SMOs amortize write cost under random inserts; speculative SMOs (SGPs) provision routing capacity proactively under skewed workloads

4Prototype on Intel Optane PMEM evaluated against Prism, FluidKV, ListDB, DPTree across the full YCSB suite under both uniform and Zipfian distributions

Key terminology

SMOStructure modification operation — node split, merge, or guidepost activation

iNodeInternal routing node in the DRAM main index

vNodeFixed-size append-only value node stored on PMEM

GPGuidepost — stable routing entry partitioning the key space within an iNode

SGPSpeculative guidepost — dormant placeholder pre-filled with predicted split keys

σPath-stability threshold — controls when deferred rebalancing fires in DRAM

δShadow-divergence threshold — controls when the PMEM shadow index must be checkpointed

mNoise-rejection margin — gates SGP allocation on predicted insert pressure

Architecture

TandemKV splits responsibility across a DRAM index that serves every foreground operation and a PMEM image that is updated only by background propagation. The design goal is visible in what does not happen on the critical path: no PMEM index writes, no fences, no pointer fix-up, no synchronous checkpoint.

DRAM-resident main index

Skiplist-style traversal combined with B⁺-tree-inspired wide nodes — Serves all foreground reads and writes. Absorbs all SMOs immediately. Holds iNodes (routing) with references to PMEM vNodes (data). Lock-free traversal with targeted iNode/vNode caching.

PMEM-resident shadow index

An identical offset-based mirror of the DRAM iNode pool acts as the durable restart image with every cross-object reference encoded as a pool index rather than a pointer. Updated only at async checkpoints; can be memory-mapped and reused with no swizzling.

Value storage — persistent vNode pool

A separate data pool of append-only vNodes holds the actual records (separate from the index); writes land in unordered arrays via non-temporal stores. Bloom filters and per-entry fingerprints compensate for the unordered layout. An adaptive size-bounded DRAM Bloom-filter cache promotes filters for hot vNodes in the background.

iNode [dual-guidepost abstraction]

Each iNode maintains two kinds of routing entries that separate stable state from speculative expansion:

GP Lazy path: stable routing entries created on-demand (real routing decisions), delta-logged, durable across checkpoints.

SGP Eager path: dormant placeholders pre-filled with predicted split keys, gated by an atomic visibility bitmap (zero lookup overhead while inactive). When an incoming split validates a predicted key, the SGP slot is linked — a single atomic fetch-or publishes it, replacing what would otherwise be a reactive GP creation. Predicted slots that aren't linked are discarded silently at next reorganization.

GP Lazy path: stable routing entries created on-demand (real routing decisions), delta-logged, durable across checkpoints.

SGP Eager path: dormant placeholders pre-filled with predicted split keys, gated by an atomic visibility bitmap (zero lookup overhead while inactive). When an incoming split validates a predicted key, the SGP slot is linked — a single atomic fetch-or publishes it, replacing what would otherwise be a reactive GP creation. Predicted slots that aren't linked are discarded silently at next reorganization.

Recovery

TandemKV prioritizes immediate availability over exhaustive reconstruction. The recovered index is structurally stale but semantically complete — all committed records remain reachable.

Recovery invariants

1Routing correctness — all traversal paths remain valid; every committed record is reachable from the recovered index

2Bounded search-path inflation — post-crash search depth exceeds the last checkpoint depth by at most δ

Stale-shadow mode (default)

Memory-maps the last checkpoint and immediately begins serving requests. SMOs recorded in the redo log after the last checkpoint are absent from the recovered index.

Recovery time: ~0s. Post-crash search initially degraded by at most δ.

When adaptive replay is enabled, a background thread monitors post-restart write activity. If writes are flowing, the index self-heals through splits and rebalancing. If no writes are detected for a configurable quiet period, the thread lazily replays the WAL in bounded chunks, refreshing the DRAM index incrementally until the log is drained and the thread exits.

Recovery time: ~0s. Post-crash search initially degraded by at most δ.

When adaptive replay is enabled, a background thread monitors post-restart write activity. If writes are flowing, the index self-heals through splits and rebalancing. If no writes are detected for a configurable quiet period, the thread lazily replays the WAL in bounded chunks, refreshing the DRAM index incrementally until the log is drained and the thread exits.

Log-replay mode

Before serving requests, replays the persistent SMO redo log to reconstruct the index state at the crash point. Longer restart time, better post-crash search quality.

Recovery time: 0.05s (δ≈1.01) to 1.65s (δ≈10.0). Preferred when post-crash latency matters more than restart time.

Recovery time: 0.05s (δ≈1.01) to 1.65s (δ≈10.0). Preferred when post-crash latency matters more than restart time.

Recovery procedure (stale-shadow)

// recoveryManager.cpp — RecoveryManager::recoveryOperation()

FUNCTION recoveryOperation()

superNode = pmemInodePool[MAX_NODES - 1] // persistent metadata sentinel

IF superNode.next != 0:

memcpyToDRAM(dramPool, pmemPool, sizeof(Inode) * last_index)

FOR EACH inode with odd seqlock version: // mid-write at crash time

bump version to next even value // unblock reader spin-waits

RETURN superNode.level

END FUNCTION

re-map PMEM pool→

copy shadow image → DRAM→

fix odd seqlocks→

reload runtime metadata→

serve requests

No pointer swizzling, no address translation, no index rebuild. Lightweight runtime metadata (skiplist height, node-allocation counters) is flushed periodically to PMEM and reloaded on restart via the

SuperNode sentinel at pmemInodePool[MAX_NODES - 1].WAL ring buffer

The SMO redo log is a persistent ring buffer on NVM managed by four atomic cursors:

a_consumed_startOldest entry not yet applied to pmem inodes — the replay frontier

a_alloc_endAllocation frontier — may contain partially written entries

a_produced_endFully written entries, ready for CLWB

a_durable_endFlushed and fence-ordered — safe to replay after crash

Cursor positions are persisted in a

CkptLogPersistMeta struct on NVM, guarded by a magic constant to distinguish valid recovery state from uninitialized memory. Two record types: FULL overwrites an entire pmem inode, DELTA patches individual GP slots in-place.// ckpt_log.cpp — replayLog()

FUNCTION replayLog(pmemInodePool)

consumed = a_consumed_start

durable = a_durable_end

IF consumed >= durable: RETURN // nothing to replay

FOR EACH record IN WAL[consumed .. durable):

IF record.type == FULL:

overwrite pmemInodePool[record.inode_id] // full snapshot

ELSE IF record.type == DELTA:

FOR EACH slot IN record.entries:

patch pmemInodePool[record.inode_id].gp[slot]

advance a_consumed_start

END FUNCTION

Adaptive replay (stale-shadow extension)

Writes naturally converge the index: each insert may trigger splits, GP creation, and rebalancing that overwrite the stale post-crash routing state. WAL replay is only necessary when writes stop flowing and the degraded DRAM index remains stuck.

// tandemIndex.cpp — adaptiveReplayThreadExec()

FUNCTION adaptiveReplayThread()

quiet_epochs = 0

WHILE WAL has pending entries:

sleep(SAMPLE_WINDOW)

writes = atomic_exchange(op_write_count, 0)

IF writes > 0:

quiet_epochs = 0 // index self-healing via live traffic

CONTINUE

quiet_epochs++

IF quiet_epochs < IDLE_EPOCHS:

CONTINUE

// No writes for IDLE_EPOCHS — index is stuck, replay a chunk

lazyReplayStep(WAL, BATCH_BYTES)

memcpyToDRAM(dramPool, pmemPool) // refresh DRAM so reads benefit

yield(LAZY_YIELD_US)

THREAD EXITS // WAL drained — no residual polling

END FUNCTION

SAMPLE_WINDOW_MS = 500Epoch length for write-activity sampling

IDLE_EPOCHS = 6Consecutive quiet epochs before lazy replay (default: 3 s)

LAZY_BATCH_BYTES = 256 KBMaximum bytes replayed per step

LAZY_YIELD_US = 200Inter-batch yield to limit foreground interference

Post-crash convergence

The same path-stability metrics used during normal execution continue to fire after recovery. Structural gaps from missing SMOs are repaired incrementally as live traffic triggers reactive and proactive SMOs — without any recovery-specific restructuring. Under typical deployments (δ = 1.1–1.5), the latency overhead is small and often negligible under read-dominated workloads. With adaptive replay enabled, write-active workloads self-heal while read-only workloads converge via background WAL drain within seconds.